You are currently browsing the category archive for the ‘Theory of Numbers’ category.

Why primality is polynomial time, but factorisation is not

Differentiating between the signature of a number and its value

A brief review: The significance of evidence-based reasoning

In a paper: `The truth assignments that differentiate human reasoning from mechanistic reasoning: The evidence-based argument for Lucas’ Gödelian thesis’, which appeared in the December 2016 issue of Cognitive Systems Research [An16], I briefly addressed the philosophical challenge that arises when an intelligence—whether human or mechanistic—accepts arithmetical propositions as true under an interpretation—either axiomatically or on the basis of subjective self-evidence—without any specified methodology for objectively evidencing such acceptance in the sense of Chetan Murthy and Martin Löb:

“It is by now folklore … that one can view the values of a simple functional language as specifying evidence for propositions in a constructive logic …” … Chetan. R. Murthy: [Mu91], \S 1 Introduction.

“Intuitively we require that for each event-describing sentence, say (i.e. the concrete object denoted by

exhibits the property expressed by

), there shall be an algorithm (depending on I, i.e.

) to decide the truth or falsity of that sentence.” … Martin H Löb: [Lob59], p.165.

Definition 1 (Evidence-based reasoning in Arithmetic): Evidence-based reasoning accepts arithmetical propositions as true under an interpretation if, and only if, there is some specified methodology for objectively evidencing such acceptance.

The significance of introducing evidence-based reasoning for assigning truth values to the formulas of a first-order Peano Arithmetic, such as PA, under a well-defined interpretation (see Section 3 in [An16]), is that it admits the distinction:

(1) algorithmically verifiable `truth’ (Definition 2}); and

(2) algorithmically computable `truth’ (Definition 3).

Definition 2 (Deterministic algorithm): A deterministic algorithm computes a mathematical function which has a unique value for any input in its domain, and the algorithm is a process that produces this particular value as output.

Note that a deterministic algorithm can be suitably defined as a `realizer‘ in the sense of the Brouwer-Heyting-Kolmogorov rules (see [Ba16], p.5).

For instance, under evidence-based reasoning the formula of the first-order Peano Arithmetic PA must always be interpreted weakly under the classical, standard, interpretation of PA (see [An16], Theorem 5.6) in terms of algorithmic verifiability (see [An16], Definition 1); where, if the PA-formula

interprets as an arithmetical relation

over

:

Definition 2 (Algorithmic verifiability): The number-theoretical relation is algorithmically verifiable if, and only if, for any natural number

, there is a deterministic algorithm

which can provide evidence for deciding the truth/falsity of each proposition in the finite sequence

.

Whereas must always be interpreted strongly under the finitary interpretation of PA (see [An16], Theorem 6.7) in terms of algorithmic computability ([An16], Definition 2), where:

Definition 3 (Algorithmic computability): The number theoretical relation is algorithmically computable if, and only if, there is a deterministic algorithm

that can provide evidence for deciding the truth/falsity of each proposition in the denumerable sequence

.

The significance of the distinction between algorithmically computable reasoning based on algorithmically computable truth, and algorithmically verifiable reasoning based on algorithmically verifiable truth, is that it admits the following, hitherto unsuspected, consequences:

(i) PA has two well-defined interpretations over the domain of the natural numbers (including

):

(a) the weak non-finitary standard interpretation ([An16], Theorem 5.6),

and

(b) a strong finitary interpretation ([An16], Theorem 6.7);

(ii) PA is non-finitarily consistent under ([An16], Theorem 5.7);

(iii) PA is finitarily consistent under ([An16], Theorem 6.8).

The significance of evidence-based reasoning for Computational Complexity

In this investigation I now show the relevance of evidence-based reasoning, and of distinguishing between algorithmically verifiable and algorithmically computable number-theoretic functions (as defined above), for Computational Complexity is that it assures us a formal foundation for placing in perspective, and complementing, an uncomfortably counter-intuitive entailment in number theory—Theorem 2 below—which has been treated by conventional wisdom as sufficient for concluding that the prime divisors of an integer cannot be proven to be mutually independent.

However, I show there that such informally perceived barriers are, in this instance, illusory; and that admitting the above distinction illustrates:

(a) Why the prime divisors of an integer are mutually independent Theorem 2;

(b) Why determining whether the signature (Definition 3 below) of a given integer —coded as the key in a modified Bazeries-cylinder (see Definition 7 of this paper) based combination lock—is that of a prime, or not, can be done in polynomial time

(Corollary 4 of this paper); as compared to the time

given by Agrawal et al in [AKS04], and improved to

by Lenstra and Pomerance in [LP11], for determining whether the value of a given integer

is that of a prime or not.

(c) Why it can be cogently argued that determining a factor of a given integer cannot be polynomial time.

Definition 4 (Signature of a number): The signature of a given integer is the sequence

where

for all primes

.

Unique since, if and

have the same signature, then

; whence

since

for

by appeal to Bertrand’s Postulate

; and the uniqueness is easily verified for

.

Definition 5 (Value of a number): The value of a given integer is any well-defined interpretation—over the domain of the natural numbers—of the (unique) numeral

that represents

in the first-order Peano Arithmetic PA.

We note that Theorem 2 establishes a lower limit for [AKS04] and [LP11], because determining the signature of a given integer does not require knowledge of the value of the integer as defined by the Fundamental Theorem of Arithmetic.

Theorem 1: (Fundamental Theorem of Arithmetic): Every positive integer can be represented in exactly one way as a product of prime powers:

where are primes and the

are positive integers (including

).

Are the prime divisors of an integer mutually independent?

In this paper I address the query:

Query 1: Are the prime divisors of an integer mutually independent?

Definition 6 (Independent events): Two events are independent if the occurrence of one event does not influence (and is not influenced by) the occurrence of the other.

Intuitively, the prime divisors of an integer seem to be mutually independent by virtue of the Fundamental Theorem of Arithmetic

Moreover, the prime divisors of can also be seen to be mutually independent in the usual, linearly displayed, Sieve of Eratosthenes, where whether an integer

is crossed out as a multiple of a prime

is obviously independent of whether it is also crossed out as a multiple of a prime

:

1, 2, 3, 4, 5, 6, 7, 8, 9, 10, 11, 12, 13, 14, 15, 16, 17, 18, 19 …

Despite such compelling evidence, conventional wisdom appears to accept as definitive the counter-intuitive conclusion that although we can see it as true, we cannot mathematically prove the following proposition as true:

Proposition 1: Whether or not a prime divides an integer

is independent of whether or not a prime

divides the integer

.

We note that such an unprovable-but-intuitively-true conclusion makes a stronger assumption than that in Gödel’s similar claim for his arithmetical formula —whose Gödel-number is

—in [Go31], p.26(2). Stronger, since Gödel does not assume his proposition to be intuitively true, but shows that though the arithmetical formula with Gödel-number

is not provable in his Peano Arithmetic

yet, for any

-numeral

, the formula

whose Gödel-number is

is

-provable, and therefore meta-mathematically true under any well-defined Tarskian interpretation of

(cf., [An16], Section 3.).

Expressed in computational terms (see [An16], Corollary 8.3), under any well-defined interpretation of , Gödel’s formula

translates as an arithmetical relation, say

, such that

is algorithmically verifiable, but not algorithmically computable, as always true over

, since

is

-provable ([An16], Corollary 8.2).

We thus argue that a perspective which denies Proposition 1 is based on perceived barriers that reflect, and are peculiar to, only the argument that:

Theorem 2: There is no deterministic algorithm that, for any given , and any given prime

, will evidence that the probability

that

divides

is

, and the probability

that

does not divide

is

.

Proof By a standard result in the Theory of Numbers ([Ste02], Chapter 2, p.9, Theorem 2.1, we cannot define a probability function for the probability that a random is prime over the probability space

.

(Compare with the informal argument in [HL23], pp.36-37.)

In other words, treating Theorem 2 as an absolute barrier does not admit the possibility—which has consequences for the resolution of outstanding problems in both the theory of numbers and computational complexity—that Proposition 1 is algorithmically verifiable, but not algorithmically computable, as true, since:

Theorem 3: For any given , there is a deterministic algorithm that, given any prime

, will evidence that the probability

that

divides

is

, and the probability

that

does not divide

is

.

(Notations, non-standard concepts, and definitions used commonly in these investigations are detailed in this post.)

A: A conventional perspective on why the prime divisors of an integer are not mutually independent

In a post Getting to the Roots of Factoring on the blog Gödel’s Lost Letter and P = NP (hosted jointly by him and Professor of Computer Science at Georgia Tech College of Computing, Richard Lipton) Professor of Computer Science and Engineering at the University of Buffalo, Kenneth W. Regan `revisits’ a 1994 paper on factoring by his co-host.

What I find intriguing in the above post is that the following extract subsumes, almost incidentally, a possibly implicit belief which may be inhibiting not only a resolution of the computational complexity of Integer Factoring (see this investigation) by conventional methods but, for similar reasons, also resolution of a large class of apparently unrelated problems (see, for instance, this investigation and this previous post):

“Here is the code of the algorithm. … the input is a product of two prime numbers,

is a polynomial in just one variable, and

refers to the greatest-common-divisor algorithm expounded by Euclid around 300 B.C. …

Repeat until exit:

a random number in

;

;

if

then exit.

Exiting enables carrying out the two prime factors of …

How many iterations must one expect to make through this maze before exit? How and when can the choice of the polynomial speed up the exploration? …

Note that we cannot consider the events and

to be independent, even though

and

are prime, because

and

may introduce bias.”

The following reasoning shows why it is not obvious whether the assertion that:

“… we cannot consider the events and

to be independent”

is intended to narrowly reflect a putative proof in the particular context of the assertion, or to echo a more general belief.

B: Defining the probability that a given integer is divisible by a given smaller integer

(1) We consider the questions:

(a) What is the probability for any given and

, where

, that:

?

(b) What is the compound probability for any given , where

,

, and

, that:

, and

?

(c) What is the compound probability for any given , where

,

, and

, that:

divides

, and

divides

?

C: Why the prime divisors of an integer are mutually independent

(2) We note that:

(a) The answer to query (1a) above is that the probability the roll of an -sided cylindrical die will yield the value

is

by the probability model for such an event as definable over the probability space

;

(b) The answer to query (1b) above is that the probability the simultaneous roll of one -sided cylindrical die and one

-sided cylindrical die will yield the values

and

, respectively, is

by the probability model for such a simultaneous event as defined over the probability space

.

(3) We trivially conclude that:

The compound probability of determining and

correctly from the simultaneous roll of one

-sided cylindrical die and one

-sided cylindrical die, is the product of the probability of determining

correctly from the roll of an

-sided cylindrical die, and the probability of determining

correctly from the roll of a

-sided cylindrical die.

(4) We further conclude non-trivially that the answer to query (1c) above is given by:

(a) If and

are co-prime, the compound probability of correctly determining that

divides

and

divides

from the simultaneous roll of one

-sided cylindrical die and one

-sided cylindrical die, is the product of the probability of correctly determining that

divides

from the roll of an

-sided cylindrical die, and the probability of correctly determining that

divides

from the roll of a

-sided cylindrical die.

(b) The assumption that and

be co-prime is also necessary, since 4(a) would not always be the case if

and

were not co-prime.

For instance, let . The probability that an

-sided cylindrical die will then yield

—and allow us to conclude that

divides

—is

, and the probability that a

-sided cylindrical die will then yield

—and allow us to conclude that

divides

—is

; but the probability of determining both that

divides

, and that

divides

, from a simultaneous roll of the two cylindrical dice is

, and not

.

(5) We thus conclude non-trivially that, if and

are two unequal primes, the probability of determining whether

divides

is independent of the probability of determining whether

divides

.

(Notations, non-standard concepts, and definitions used commonly in these investigations are detailed in this post.)

A: The twin prime conjecture

In the post Notes on the Bombieri asymptotic sieve on his blog What’s new, 2006 Fields Medal awardee and Professor of Mathematics at UCLA, Terence Tao, writes and comments that:

“The twin prime conjecture, still unsolved, asserts that there are infinitely many primes such that

is also prime. A more precise form of this conjecture is (a special case) of the Hardy-Littlewood prime tuples conjecture, which asserts that:

… (1)

as , where

is the von Mangoldt function and

is the twin prime constant:

.

Because is almost entirely supported on the primes, it is not difficult to see that (1) implies the twin prime conjecture.

One can give a heuristic justification of the asymptotic (1) (and hence the twin prime conjecture) via sieve theoretic methods. …

It may also be possible that the twin prime conjecture could be proven by non-analytic means, in a way that does not lead to significantly new estimates on the sum (though this sum will of course have to go to infinity as

if the twin prime conjecture holds).”

B: Why heuristic approaches to the twin prime conjecture may not suffice

1. What seems to prevent a non-heuristic determination of the limiting behaviour of prime counting functions is that the usual approximations of for finite values of

are apparently derived from real-valued functions which are asymptotic to

, such as

,

and Riemann’s function

.

2. The degree of approximation for finite values of is thus determined only heuristically, by conjecturing upon an error term in the asymptotic relation that can be seen to yield a closer approximation than others to the actual values of

.

3. Moreover, currently, conventional approaches to evaluating prime counting functions for finite may also subscribe to the belief:

(i) either—explicitly (see here)—that whether or not a prime divides an integer

is not independent of whether or not a prime

divides the integer

;

(ii) or—implicitly (since the twin-prime problem is yet open)—that a proof to the contrary must imply that if is the probability that

is a prime, then

.

4. If so, then conventional approaches seem to conflate the two probabilities:

(i) The probability of selecting a number that has the property of being prime from a given set

of numbers;

Example 1: I have a bag containing numbers in which there are twice as many composites as primes. What is the probability that the first number you blindly pick from it is a prime. This is the basis for setting odds in games such as roulette.

(ii) The probability of determining that a given integer

is prime.

Example 2: I give you a -digit combination lock along with a

-digit number

. The lock only opens if you set the combination to a proper factor of

which is greater than

. What is the probability that the first combination you try will open the lock. This is the basis for RSA encryption, which provides the cryptosystem used by many banks for securing their communications.

5. In case 4(i), if the precise proportion of primes to non-primes in is definable, then clearly

too is definable.

However if is the set

of all integers, and we cannot define a precise ratio of primes to composites in

, but only an order of magnitude such as

, then equally obviously

cannot be defined in

(see Chapter 2, p.9, Theorem 2.1, here).

6. In case 4(ii) it follows that , since we can show (see Corollary 2.9 on p.14 of this investigation) that whether or not a prime

divides a given integer

is independent of whether or not a prime

divides

.

7. We thus have that . Hence, even though we cannot define the probability

of selecting a number from the set

of all natural numbers that has the property of being prime,

can be treated as the de facto probability that a given

is prime.

8. Moreover, by considering the asymptotic density of the set of all integers that are not divisible by the first primes

we can show that, for any

, the expected number of such integers in any interval of length

is:

.

9. We can then show that a non-heuristic approximation for the number of primes less than or equal to is given for all

by:

for some constant .

10. We can show, similarly, that the expected number of Dirichlet and twin primes in the interval () can be estimated similarly; and conclude that the number of such primes

is, in each case, cumulatively approximated non-heuristically by a function that

.

11. The method can, moreover, be generalised to apply to a large class of prime counting functions (see Section 5.A on p.22 of this investigation).

C: A non-heuristic proof that there are infinitely many twin primes

1. In particular, instead of estimating:

heuristically by analytic considerations, one could also estimate the number of twin primes

non-heuristically as:

where is the de facto probability that a given

is prime; and where we need to allow for the possibility that the two probabilities may not be independent.

2. One way of approaching this would be to define an integer as a

integer if, and only if,

and

for all

, where

is defined for all

by:

3. Note that if is a

integer, then both

and

are not divisible by any of the first

primes

.

4. The asymptotic density of integers over the set of natural numbers is then:

.

5. Further, if is a

integer, then

is a prime and either

is also a prime, or

.

6. If we define as the number of

integers

, the expected number of

integers in any interval

is given by:

7. Since is at most one less than the number of twin-primes in the interval

, it follows that:

8. Now, the expected number of integers in the interval

is given by:

.

9. We conclude that the number of twin primes

is given by the cumulative non-heuristic approximation:

.

(Notations, non-standard concepts, and definitions used commonly in these investigations are detailed in this post.)

We investigate whether the probabilistic distribution of prime numbers can be treated as a heuristic model of quantum behaviour, since it too can be treated as a quantum phenomena, with a well-defined binomial probability function that is algorithmically computable, where the conjectured values of differ from actual values with a binomial standard deviation, and where we define a phenomena as a quantum phenomena if, and only if, it obeys laws that can only be represented mathematically by functions that are algorithmically verifiable, but not algorithmically computable.

1. Thesis: The concept of ‘mathematical truth’ must be accountable

The thesis of this investigation is that a major philosophical challenge—which has so far inhibited a deeper understanding of the quantum behaviour reflected in the mathematical representation of some laws of nature (see, for instance, this paper by Eamonn Healey)—lies in holding to account the uncritical acceptance of propositions of a mathematical language as true under an interpretation—either axiomatically or on the basis of subjective self-evidence—without any specified methodology of accountability for objectively evidencing such acceptance.

2. The concept of ‘set-theoretical truth’ is not accountable

Since current folk lore is that all scientific truths can be expressed adequately, and communicated unambiguously, in the first order Set Theory ZF, and since the Axiom of Infinity of ZF cannot—even in principle—be objectively evidenced as true under any putative interpretation of ZF (as we argue in this post), an undesirable consequence of such an uncritical acceptance is that the distinction between the truths of mathematical propositions under interpretation which can be objectively evidenced, and those which cannot, is not evident.

3. The significance of such accountability for mathematics

The significance of such a distinction for mathematics is highlighted in this paper due to appear in the December 2016 issue of Cognitive Systems Research, where we address this challenge by considering the two finitarily accountable concepts of algorithmic verifiability and algorithmic computability (first introduced in this paper at the Symposium on Computational Philosophy at the AISB/IACAP World Congress 2012-Alan Turing 2012, Birmingham, UK).

(i) Algorithmic verifiability

A number-theoretical relation is algorithmically verifiable if, and only if, for any given natural number

, there is an algorithm

which can provide objective evidence for deciding the truth/falsity of each proposition in the finite sequence

.

(ii) Algorithmic computability

A number theoretical relation is algorithmically computable if, and only if, there is an algorithm

that can provide objective evidence for deciding the truth/falsity of each proposition in the denumerable sequence

.

(iii) Algorithmic verifiability vis à vis algorithmic computability

We note that algorithmic computability implies the existence of an algorithm that can decide the truth/falsity of each proposition in a well-defined denumerable sequence of propositions, whereas algorithmic verifiability does not imply the existence of an algorithm that can decide the truth/falsity of each proposition in a well-defined denumerable sequence of propositions.

From the point of view of a finitary mathematical philosophy—which is the constraint within which an applied science ought to ideally operate—the significant difference between the two concepts could be expressed by saying that we may treat the decimal representation of a real number as corresponding to a physically measurable limit—and not only to a mathematically definable limit—if and only if such representation is definable by an algorithmically computable function (Thesis 1 on p.9 of this paper that was presented on 26th June at the workshop on Emergent Computational Logics at UNILOG’2015, 5th World Congress and School on Universal Logic, Istanbul, Turkey).

We note that although every algorithmically computable relation is algorithmically verifiable, the converse is not true.

We show in the CSR paper how such accountability helps define finitary truth assignments that differentiate human reasoning from mechanistic reasoning in arithmetic by identifying two, hitherto unsuspected, Tarskian interpretations of the first order Peano Arithmetic PA, under both of which the PA axioms interpret as finitarily true over the domain of the natural numbers, and the PA rules of inference preserve such truth finitarily over

.

4. The ambit of human reasoning vis à vis the ambit of mechanistic reasoning

One corresponds to the classical, non-finitary, putative standard interpretation of PA over , and can be treated as circumscribing the ambit of human reasoning about ‘true’ arithmetical propositions.

The other corresponds to a finitary interpretation of PA over that circumscibes the ambit of mechanistic reasoning about ‘true’ arithmetical propositions, and establishes the long-sought for consistency of PA (see this post); which establishes PA as a mathematical language of unambiguous communication for the mathematical representation of physical phenomena.

5. The significance of such accountability for the mathematical representation of physical phenomena

The significance of such a distinction for the mathematical representation of physical phenomena is highlighted in this paper that was presented on 26th June at the workshop on Emergent Computational Logics at UNILOG’2015, 5th World Congress and School on Universal Logic, Istanbul, Turkey, where we showed how some of the seemingly paradoxical elements of quantum mechanics may resolve if we define:

Quantum phenomena: A phenomena is a quantum phenomena if, and only if, it obeys laws that can only be represented mathematically by functions that are algorithmically verifiable but not algorithmically computable.

6. The mathematical representation of quantum phenomena that is determinate but not predictable

By considering the properties of Gödel’s function (see

4.1 on p.8 of this preprint)—which allows us to strongly represent any non-terminating sequence of natural numbers by an arithmetical function—it would follow that, since any projection of the future values of a quantum-phenomena-associated, algorithmically verifiable, function is consistent with an infinity of algorithmically computable functions, all of whose past values are identical to the algorithmically verifiable past values of the function, the phenomena itself would be essentially unpredicatable if it cannot be represented by an algorithmically computable function.

However, since the algorithmic verifiability of any quantum phenomena shows that it is mathematically determinate, it follows that the physical phenomena itself must observe determinate laws.

7. Such representation does not need to admit multiverses

Hence (contrary to any interpretation that admits unverifiable multiverses) only one algorithmically computable extension of the function is consistent with the law determining the behaviour of the phenomena, and each possible extension must therefore be associated with a probability that the next observation of the phenomena is described by that particular extension.

8. Is the probability of the future behaviour of quantum phenomena definable by an algorithmically computable function?

The question arises: Although we cannot represent quantum phenomena explicitly by an algorithmically computable function, does the phenomena lend itself to an algorithmically computable probability of its future behaviour in the above sense?

9. Can primes yield a heuristic model of quantum behaviour?

We now show that the distribution of prime numbers denoted by the arithmetical prime counting function is a quantum phenomena in the above sense, with a well-defined probability function that is algorithmically computable.

10. Two prime probabilities

We consider the two probabilities:

(i) The probability of selecting a number that has the property of being prime from a given set

of numbers;

Example 1: I have a bag containing numbers in which there are twice as many composites as primes. What is the probability that the first number you blindly pick from it is a prime. This is the basis for setting odds in games such as roulette.

(ii) The probability of determining a proper factor of a given number

.

Example 2: I give you a -digit combination lock along with a

-digit number

. The lock only opens if you set the combination to a proper factor of

which is greater than

. What is the probability that the first combination you try will open the lock. This is the basis for RSA encryption, which provides the cryptosystem used by many banks for securing their communications.

11. The probability of a randomly chosen number from the set of natural numbers is not definable

Clearly the probability of selecting a number that has the property of being prime from a given set

of numbers is definable if the precise proportion of primes to non-primes in

is definable.

However if is the set

of all integers, and we cannot define a precise ratio of primes to composites in

, but only an order of magnitude such as

, then equally obviously

cannot be defined in

(see Chapter 2, p.9, Theorem 2.1, here).

12. The prime divisors of a natural number are independent

Now, the following paper proves , since it shows that whether or not a prime

divides a given integer

is independent of whether or not a prime

divides

:

Why Integer Factorising cannot be polynomial time

We thus have that , with a binomial standard deviation.

Hence, even though we cannot define the probability of selecting a number from the set

of all natural numbers that has the property of being prime,

can be treated as the putative non-heuristic probability that a given

is a prime.

13. The distribution of primes is a quantum phenomena

The distribution of primes is thus determinate but unpredictable, since it is representable by the algorithmically verifiable but not algorithmically computable arithmetical number-theoretic function , where

is the

‘th prime.

The Prime Number Generating Theorem and the Trim and Compact algorithms detailed in this 1964 investigation illustrate why the arithmetical number-theoretic function is algorithmically verifiable but not algorithmically computable (see also this Wikipedia proof that no non-constant polynomial function

with integer coefficients exists that evaluates to a prime number for all integers

.).

Moreover, although the distribution of primes is a quantum phenomena with probabilty , it is easily seen (see Figs. 7-11 on pp.23-26 of this preprint) that the generation of the primes is algorithmically computable.

14. Why the universe may be algorithmically computable

By analogy, this suggests that although the measurable values of some individual properties of particles in the universe over time may represent a quantum phenomena, the universe itself may be algorithmically computable if the laws governing the generation of all the particles in the universe over time are algorithmically computable.

Notations, non-standard concepts, and definitions used commonly in these investigations are detailed in this post.)

1. Since, by the Prime Number Theorem, the number of primes is

, it would follow that determining a factor of

requires at least one logical operation for each prime

, and therefore cannot be done in polynomial time—whence

—IF whether or not a prime

divides an integer

were independent of whether or not a prime

divides the integer

.

2. Currently, conventional approaches to determining the computational complexity of Integer Factorising apparently appeal critically to the belief that:

(i) either—explicitly (see here)—that whether or not a prime divides an integer

is not independent of whether or not a prime

divides the integer

;

(ii) or—implicitly (since the problem is yet open)—that a proof to the contrary must imply that if is the probability that

is a prime, then

.

3. If so, then conventional approaches seem to conflate the two probabilities:

(i) The probability of selecting a number that has the property of being prime from a given set

of numbers;

Example 1: I have a bag containing numbers in which there are twice as many composites as primes. What is the probability that the first number you blindly pick from it is a prime. This is the basis for setting odds in games such as roulette.

(ii) The probability of determining that a given integer

is prime.

Example 2: I give you a -digit combination lock along with a

-digit number

. The lock only opens if you set the combination to a proper factor of

which is greater than

. What is the probability that the first combination you try will open the lock. This is the basis for RSA encryption, which provides the cryptosystem used by many banks for securing their communications.

4. In case 3(i), if the precise proportion of primes to non-primes in is definable, then clearly

too is definable.

However if is the set

of all integers, and we cannot define a precise ratio of primes to composites in

, but only an order of magnitude such as

, then equally obviously

cannot be defined in

(see Chapter 2, p.9, Theorem 2.1, here).

5. In case 3(ii) the following paper proves , since it shows that whether or not a prime

divides a given integer

is independent of whether or not a prime

divides

:

Why Integer Factorising cannot be polynomial time

Not only does it immediately follow that (see here), but we further have that

, with a binomial standard deviation. Hence, even though we cannot define the probability

of selecting a number from the set

of all natural numbers that has the property of being prime,

can be treated as the de facto probability that a given

is prime, with all its attended consequences for various prime-counting functions and the Riemann Zeta function (see here).

1 The Riemann Hypothesis (see also this paper) reflects the fact that all currently known approximations of the number of primes

are heuristic

All known approximations of the number of primes

are currently derived from real-valued functions that are asymptotic to

, such as

,

and Riemann’s function

, where the degree of approximation for finite values of

is determined only heuristically by conjecturing upon an error term in the asymptotic relation that can be `seen’, but not yet proven, to yield `good’ approximations of

(as described, for instance, by Barry Mazur and William Stein on p.36 of their absorbingly written, but without sacrificing rigour, entry-level book Prime Numbers and the Riemann Hypothesis).

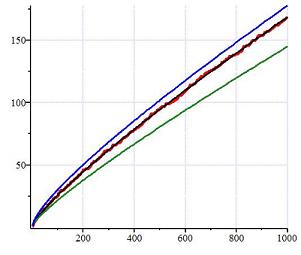

The above graph compares the actual number (red) of primes

with the distribution of primes as estimated variously by the functions

(blue),

(black), and

(green), where

is Riemann’s function

.

1A Two non-heuristic approximations of the number of primes

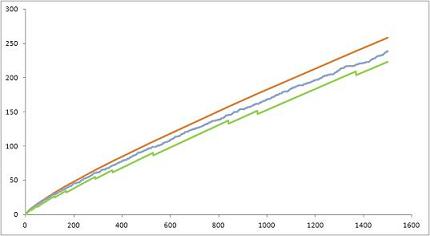

The following introduces, and compares, two non-heuristic prime counting functions (investigated more fully here) that, prima facie, yield non-heuristic approximations of :

(i) (green); and

(ii) (red);

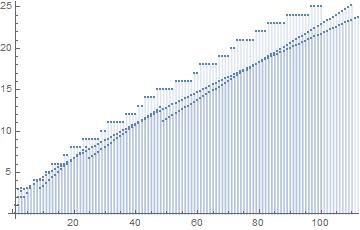

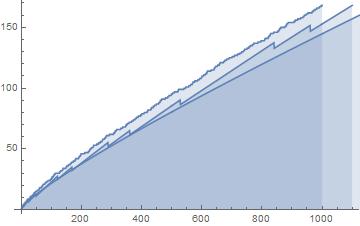

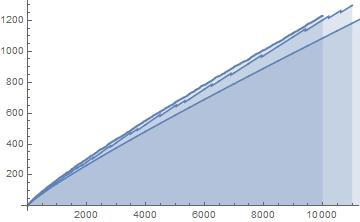

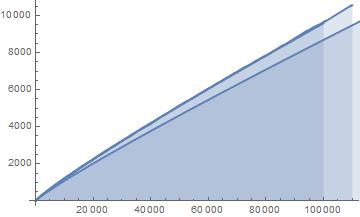

Based on manual and spreadsheet calculations, Figs.1 and 2 compare the values of the two non-heuristically estimated prime counting functions with the actual values of (blue) for

and

.

Fig.1: The above graph compares the non-heuristically estimated values of (green) and

(red) vs the actual values of

(blue) for

.

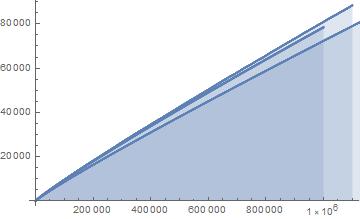

Fig.2: The above graph compares the non-heuristically estimated values of (green) and

(red) vs the actual values of

(blue) for

. Note that the gradient of both

and of

in the interval

is

.

1B Three intriguing queries

Since (by the Prime Number Theorem), whilst

(by Mertens’ Theorem, where

is the Euler-Mascheroni constant and

), and it can be shown (see Corollary 3.2 here) that

for some

, this raises the interesting queries (see also

2 of this initial investigation):

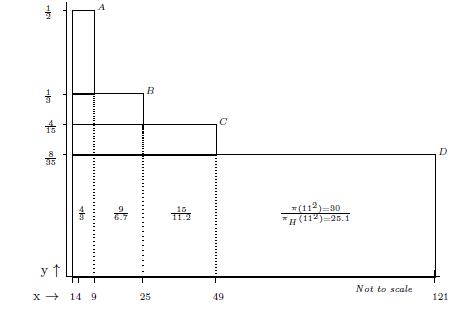

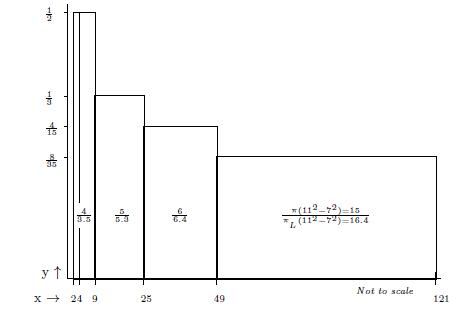

Fig.3. The overlapping rectangles represent

for

. Figures within each rectangle are the primes and estimated primes corresponding to the functions

and

, respectively, within the interval

for

.

Fig.4: Graph of . The rectangles represent

for

. Figures within each rectangle are the primes corresponding to the functions

and

within the interval

for

. The area under the curve is

.

(a) Which is the least , if any, such that

?

(b) Which is the largest , if any, such that

?

(c) Is an algorithmically verifiable, but not algorithmically computable (see Definitions 1 and 2 here), function which is discontinuous in the limit

(in the sense of Zeno’s paradox in 2-dimensions considered in Case 4 here)?

1C Three intriguing observations

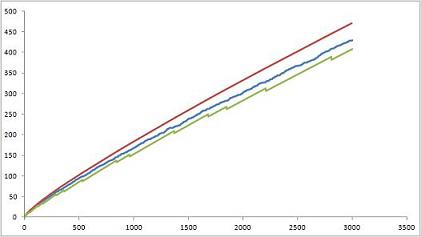

Fig.5: The above graph compares the actual values of in the range

, with

in the range

, and

in the range

.

Fig.6: The above graph compares the actual values of in the range

, with

in the range

, and

in the range

.

Fig.7: The above graph compares the actual values of in the range

, with

in the range

, and

in the range

.

Fig.8: The above graph compares the actual values of in the range

, with

in the range

, and

in the range

.

Fig.9: The above graph compares the actual values of in the range

, with

in the range

, and

in the range

.

Query (a) is answered by Figs.8-9, which show that the least such that

occurs somewhere around

.

As regards Query (b) however, we conjecture that Fig.9 suggests that there is no larger such that

.

Finally, we conjecture that—despite what is suggested by the Prime Number Theorem—Query (c) can be answered affirmatively if the three functions ,

, and

are as well-behaved as Fig.9 suggests!

2 Density of integers not divisible by primes

The above observations reflect the circumstance that (formal proofs for the following are detailed in Chapters 34 and 35 here):

Lemma 2.1: The asymptotic density of the set of all integers that are not divisible by any of a given set of primes is:

.

It follows that:

Lemma 2.2: The expected number of integers in any interval that are not divisible by any of a given set of primes

is:

.

2A The function

In particular, the expected number of integers

that are not divisible by any of the first

primes

is:

Corollary 2.3:

It follows that:

Corollary 2.4: The expected number of primes is:

We conclude that is a non-heuristic approximation of the number of primes

:

Lemma 2.5: .

2B The function

We note similarly that:

Corollary 2.6: The expected number of primes in the interval () is:

.

It further follows from Lemma 2.2 and Corollary 2.6 that:

Corollary 2.7: The number of primes less than

is cumulatively approximated by:

.

We conclude that is also a cumulative non-heuristic approximation of the number of primes

:

Lemma 2.8: .

2C An apparent paradox

We note that since the above non-heuristic estimates appeal to an asymptotic density, and the density of primes tends to as

, Figs.1-9 suggest that:

(i) overestimates

cumulatively over each of the intervals; whilst

(ii) overestimates

over a single cumulative interval.

even though Fig.3 suggests that:

The number of primes in any interval

for any given

is constant as

; but

The contribution of the expected number of primes in the interval

to the total expected number (see Corollary 2.4),

, of primes in the interval

decreases monotonically.

In other words, an apparent paradox surfaces (as suggested by Fig.9) when we express and the function

in terms of the number of primes determined by each function respectively in each interval

as follows:

Since as

, we can, for any given

, define

such that:

We conclude that:

Theorem For any given , we can find

such that the estimated number

of primes

is always less than the actual number of primes less than

plus the number of primes between

and

as estimated by

.

Since (by the Prime Number Theorem), whilst

(where

), and

for all

(see Figs.3 and 4), we note the apparent paradox:

Corollary The Prime Number Theorem implies that:

3 Conventional estimates of for finite

are heuristic

We note that Guy Robin (Robin, G. (1983). “Sur l’ordre maximum de la fonction somme des diviseurs”. Séminaire Delange-Pisot-Poitou, Théorie des nombres (1981-1982). Progress in Mathematics. 38: 233-244.) proved (implicit assumptions?) that the following changes sign infinitely often:

Robin’s result is analogous to Littlewood’s curious theorem (implicit assumptions?) that the difference changes sign infinitely often. No analogue of the Skewes number (an upper bound on the first natural number

for which

) is, however, known for Robin’s result.

Littlewood’s theorem is `curious’ since:

(a) There is no explicitly defined arithmetical formula that, for any , will yield

. Hence, Littlewood’s proof deduces the behaviour of

for finite values of

by implicitly appealing to the relation of

to

, defined as

over all integers

, through the identity of the infinite summation with the Euler product

over all primes, which is valid only for Re

; as is its consequence (which involves the re-arrangement of an infinite summation that, too, is valid only for Re

):

(b) Littlewood’s proof deduces the behaviour of for finite values of

by (uncritically, as I argue here?) appealing to the analytically continued behaviour of

in areas where

is not defined!

Moreover, we note that—unlike and

—conventional estimates of

for finite values of

can be treated as heuristic, since they appeal only to the limiting behaviour (Theorem 420, p.345, Hardy and Wright, Introduction to the Theory of Numbers, 4th ed, 1960, Oxford University Press) of a formally (i.e., explicitly) undefined arithmetical function,

, to the limiting behaviours of formally defined arithmetical functions

and

:

where:

and, as detailed here, the latter is curiously stipulated as valid only for , but definable in terms of a summation over the zeros of the zeta function in the critical strip

:

“ is given by the so-called explicit formula

for and

not a prime or prime power, and the sum is over all nontrivial zeros

of the Riemann zeta function

, i.e., those in the critical strip so

, and interprets as

.”

(Notations, non-standard concepts, and definitions used commonly in these investigations are detailed in this post.)

Are there still unsolved problems about the numbers

In a 2005 Clay Math Institute invited large lecture to a popular audience at MIT ‘Are there still unsolved problems about the numbers ’, co-author with William Stein of Prime Numbers and the Riemann Hypothesis, and Gerhard Gade Harvard University Professor, Barry Mazur remarked that (p.6):

“If I give you a number , say

= one million, and ask you for the first number after

that is prime, is there a method that answers that question without, in some form or other, running through each of the successive numbers after

rejecting the nonprimes until the first prime is encountered? Answer: unknown.”

Although this may represent conventional wisdom, it is not, however, strictly true!

Reason: The following 1964 Theorem yields two algorithms (Trim/Compact), each of which affirmatively answers the questions:

(i) Do we necessarily discover the primes in a Platonic domain of the natural numbers, as is suggested by the sieve of Eratosthenes, or can we also generate them sequentially in a finitarily definable domain that builds into them the property of primality?

(ii) In other words, given the first primes, can we generate the prime

algorithmically, without testing any number larger than

for primality?

I: A PRIME NUMBER GENERATING THEOREM

Theorem: For all , let

denote the

'th prime, and define

such that:

,

and:

.

Let be such that, for all

, there is some

such that:

.

Then:

.

Proof: For all , there is some

such that:

.

Since :

.

Hence:

,

and so it is the prime .

II: TRIM NUMBERS

The significance of the Prime Number Generating Theorem is seen in the following algorithm.

We define Trim numbers recursively by , and

, where

is the

‘th prime and:

(1) , and

is the only element in the

nd array;

(2) is the smallest even integer that does not occur in the

‘th array

;

(3) is selected so that:

for all

.

It follows that the Trim number is, thus, a prime unless all its prime divisors are less than

.

The Trim Number Algorithm

n

1 2 2 3 5 7 11 13 17 19 23 27 29 31 37

2 3 1 5

3 5 1 1 7

4 7 1 2 3 11

5 11 1 1 4 3 13

6 13 1 2 2 1 9 17

7 17 1 1 3 4 5 9 19

8 19 1 2 1 2 3 7 15 23

9 23 1 1 2 5 10 3 11 15 27

10 27 1 0 3 1 6 12 7 11 19 29

11 29 1 1 1 6 4 10 5 9 17 31

n

…

NOTE: The first Trim Numbers upto

consist of the first

primes and the

composites:

(i) Trim composite :

(ii) Trim composite :

(iii) Trim composite :

(iv) Trim composite :

Theorem: For all

II A: -TRIM NUMBERS

For any given natural number , we define

-Trim numbers recursively by

, and

, where

is the

‘th prime and:

(1) , and

is the only element in the

nd array;

(2) is the smallest even integer that does not occur in the

‘th array

;

(3) is selected so that:

for all

.

It follows that the -Trim number

is, thus, not divisible by any prime

unless the prime is smaller than

.

III: COMPACT NUMBERS

Compact numbers are defined recursively by , and

, where

is the

‘th prime and:

(1) , and

is the only element in the

nd array;

(2) is the smallest even integer that does not occur in the nth array

;

(3) is selected so that:

for all

;

(4) is selected so that:

;

(5) if

.

It follows that the compact number is either a prime, or a prime square, unless all, except a maximum of

, prime divisors of the number are less than

.

The Compact Number Algorithm

n

1 2 2 3 5 7 11 13 17 19 23 29 31 37 41

2 3 1 5

3 5 1 7

4 7 1 9

5 9 1 0 11

6 11 1 1 13

7 13 1 2 17

8 17 1 1 19

9 19 1 2 23

10 23 1 1 25

11 25 1 2 0 29

12 29 1 1 1 31

13 31 1 2 4 37

14 37 1 2 3 41

15 41 1 1 4 43

16 43 1 2 2 47

17 47 1 1 3 49

18 49 1 2 1 0 53

19 53 1 1 2 3 57

20 57 1 0 3 6 59

21 59 1 1 1 4 61

22 61 1 2 4 2 67

23 67 1 2 3 3 71

24 71 1 1 4 6 73

25 73 1 2 2 4 79

26 79 1 2 1 5 83

27 83 1 1 2 1 87

28 87 1 0 3 4 89

29 89 1 1 1 2 93

30 93 1 0 2 5 97

31 97 1 2 3 1 101

32 101 1 1 4 4 103

33 103 1 2 2 2 107

34 107 1 1 3 5 109

35 109 1 2 1 3 113

36 113 1 1 2 6 117

37 117 1 0 3 2 121

38 121 1 2 4 5 0 127

39 127 1 2 3 6 5 131

40 131 1 1 4 2 1 137

41 137 1 1 3 3 6 139

42 139 1 2 1 1 4 145

43 145 1 2 0 2 9 149

44 149 1 1 1 5 5 151

45 151 1 2 4 5 0 157

46 157 1 2 3 4 8 163

47 163 1 2 2 5 2 167

48 167 1 1 3 1 9 169

49 169 1 2 1 6 7 0 173

50 173 1 1 2 2 3 9 177

51 177 1 0 3 5 10 5 179

52 179 1 1 1 3 8 3 181

53 181 1 2 4 1 6 1 189

54 189 1 0 1 0 9 6 191

55 191 1 1 4 5 7 4 193

56 193 1 2 2 3 5 2 197

57 197 1 1 3 6 1 11 199

58 199 1 2 1 4 10 9 205

59 205 1 2 0 5 4 3 211

60 211 1 2 4 6 9 10 219

61 219 1 0 1 5 1 2 223

62 223 1 2 2 1 8 11 227

63 227 1 1 3 4 4 7 229

64 229 1 2 1 2 2 5 233

65 233 1 1 2 5 9 14 237

66 237 1 0 3 1 5 10 239

67 239 1 1 1 6 3 8 241

68 241 1 2 4 4 1 6 249

69 249 1 0 1 3 4 11 251

70 251 1 1 4 1 2 9 257

71 257 1 1 3 2 7 3 261

72 261 1 0 4 5 3 12 263

73 263 1 1 2 3 1 10 267

74 267 1 0 3 6 8 6 269

75 269 1 1 1 4 6 4 271

76 271 1 2 4 2 4 2 277

77 277 1 2 3 3 9 9 281

78 281 1 1 4 6 5 5 283

79 283 1 2 2 4 3 3 289

n

…

NOTE: The first Compact Numbers upto

consist of the first

primes,

prime squares, and

composites.

Theorem 1: There is always a Compact Number such that

.

Theorem 2: For sufficiently large .

A 2-dimensional Eratosthenes sieve

A later investigation (see also this post) shows why the usual, linearly displayed, Eratosthenes sieve argument reveals the structure of divisibility (and, ipso facto, of primality) more transparently when displayed as the 1964, -dimensional matrix, representation of the residues

, defined for all

and all

by:

, where

.

‘Density‘: For instance, the residues can be defined for all

as the values of the non-terminating sequences

, defined for all

(as illustrated below in Fig.1).

Fig.1

Sequence

…

n=1 1 2 3 4 5 6 7 8 9 10 … n-1

n=2 0 1 2 3 4 5 6 7 8 9 … n-2

n=3 0 1 1 2 3 4 5 6 7 8 … n-3

n=4 0 0 2 1 2 3 4 5 6 7 … n-4

n=5 0 1 1 3 1 2 3 4 5 6 … n-5

n=6 0 0 0 2 4 1 2 3 4 5 … n-6

n=7 0 1 2 1 3 5 1 2 3 4 … n-7

n=8 0 0 1 0 2 4 6 1 2 3 … n-8

n=9 0 1 0 3 1 3 5 7 1 2 … n-9

n=10 0 0 2 2 0 2 4 6 8 1 … n-10

n=11 0 1 1 1 4 1 3 5 7 9 … n-11

n

…

We note that:

For any

, the non-terminating sequence

cycles through the values

with period

;

For any

the ‘density’—over the set of natural numbers—of the set

of integers that are divisible by

is

; and the ‘density’ of integers that are not divisible by

is

.

Primality: The residues can be alternatively defined for all

as values of the non-terminating sequences,

, defined for all

(as illustrated below in Fig.2).

Fig.2

Sequence

…

1 2 3 4 5 6 7 8 9 10 … n-1

0

1 2 3 4 5 6 7 8 9 … n-2

0 1

1 2 3 4 5 6 7 8 … n-3

0 0 2

1 2 3 4 5 6 7 … n-4

0 1 1 3

1 2 3 4 5 6 … n-5

0 0 0 2 4

1 2 3 4 5 … n-6

0 1 2 1 3 5

1 2 3 4 … n-7

0 0 1 0 2 4 6

1 2 3 … n-8

0 1 0 3 1 3 5 7

1 2 … n-9

0 0 2 2 0 2 4 6 8

1 … n-10

0 1 1 1 4 1 3 5 7 9

… n-11

…

We note that:

The non-terminating sequences

highlighted in bold correspond to a prime

(since

for any

) in the usual, linearly displayed, Eratosthenes sieve:

The non-terminating sequences

highlighted in italics identify a crossed out composite

(since

for some

) in the usual, linearly displayed, Eratosthenes sieve.

The significance of expressing Eratosthenes sieve as a -dimensional matrix

Fig.1 illustrates that although the probability of selecting a number that has the property of being prime from a given set

of numbers is definable if the precise proportion of primes to non-primes in

is definable, if

is the set

of all integers, and we cannot define a precise ratio of primes to composites in

, but only an order of magnitude such as

, then equally obviously

cannot be defined in

(see Chapter 2, p.9, Theorem 2.1, here).

The probability of determining a proper factor of a given number

Fig.2 illustrates, however, that the probability of determining a proper factor of a given number

is

, since this paper shows that whether or not a prime

divides a given integer

is independent of whether or not a prime

divides

.

We thus have that .

The putative non-heuristic probability that a given is a prime

Hence, even though we cannot define the probability of selecting a number from the set

of all natural numbers that has the property of being prime,

can be treated as the putative non-heuristic probability that a given

is a prime.

“What sort of Hypothesis is the Riemann Hypothesis?”

The significance of the above perspective is that (see this investigation) it admits non-heuristic approximations of prime counting functions (see Fig.15 of the investigation) where:

“It has long been known that that for any real number the number of prime numbers less than

(denoted

is approximately

in the sense that the ratio

tends to

as

goes to infinity. The Riemann Hypothesis would give us a much more accurate “count” for

in that it will offer a specific smooth function

(hardly any more difficult to describe than

) and then conjecture that

is an essentially square root accurate approximation to

; i.e., for any given exponent greater than

(you choose it:

, for example) and for large enough

where the phrase “large enough” depends on your choice of exponent the error term i.e., the difference between

and the number of primes less than

in absolute value is less than

raised to that exponent (e.g.

, etc.)"

… Barry Mazur and William Stein, ‘What is Riemann’s Hypothesis‘

This argument laid the foundation for this investigation. See also this arXiv preprint, and this broader update.

Abstract We define the residues for all

and all

such that

if, and only if,

is a divisor of

. We then show that the joint probability

of two unequal primes

dividing any integer

is the product

. We conclude that the prime divisors of any integer

are independent; and that the probability

of

being a prime

is

. The number of primes less than or equal to

is thus given by

. We further show that

, and conclude that

does not oscillate.

The residues

We begin by defining the residues for all

and all

as below:

Definition 1: where

.

Since each residue cycles over the

values

, these values are all incongruent and form a complete system of residues [1]

.

It immediately follows that:

Lemma 1: if, and only if,

is a divisor of

.

The probability

By the standard definition of the probability of an event

, we conclude that:

Lemma 2: For any and any given integer

, the probability

that

is

, and the probability

that

is

.

We note the standard definition:

Definition 2: Two events and

are mutually independent for

if, and only if,

.

The prime divisors of any integer

are mutually independent

We then have that:

Lemma 3: If and

are two primes where

then, for any

, we have:

where and

.

Proof: The numbers

, where

and

, are all incongruent and form a complete system of residues [2]

. Hence:

.

By Lemma 2:

.

The lemma follows.

If and

in Lemma 3, so that both

and

are prime divisors of

, we conclude by Definition 2 that:

Corollary 1: .

Corollary 2: .

Theorem 1: The prime divisors of any integer are mutually independent. [3]

The probability that

is a prime

Since is a prime if, and only if, it is not divisible by any prime

, it follows immediately from Lemma 2 and Lemma 3 that:

Lemma 4: For any , the probability

of an integer

being a prime

is the probability that

for any

if

.

Lemma 5: [4].

The Prime Number Theorem

The number of primes less than or equal to is thus given by:

Lemma 6: .

This now yields the Prime Number Theorem:

Theorem 2: .

Proof: From Lemma 6 and Mertens’ Theorem that

[5]:

it follows that:

The behaviour of as

is then seen by differentiating the right hand side, where we note that

:

Hence does not oscillate as

.

Acknowledgements

I am indebted to my erstwhile classmate, Professor Chetan Mehta, for his unqualified encouragement and support for my scholarly pursuits over the past fifty years; most pertinently for his patiently critical insight into the required rigour without which the argument of this 1964 investigation would have remained in the informal universe of seemingly self-evident truths.

References

HW60 G. H. Hardy and E. M. Wright. 1960. An Introduction to the Theory of Numbers 4th edition. Clarendon Press, Oxford.

Ti51 E. C. Titchmarsh. 1951. The Theory of the Riemann Zeta-Function. Clarendon Press, Oxford.

Notes

Return to 1: HW60, p.49.

Return to 2: HW60, p.52, Theorem 59.

Return to 3: In the previous post we have shown how it immediately follows from Theorem 1 that integer factorising is necessarily of order ; from which we conclude that integer factorising cannot be in the class

of polynomial-time algorithms.

Return to 4: By HW60, p.351, Theorem 429, Mertens’ Theorem.

Return to 5: By the argument in Ti51, p.59, eqn.(3.15.2).

Return to 6: HW60, p.9, Theorem 7.

Recent comments